Designing AI Products: From ML Models to Human Experiences

2023 - Present

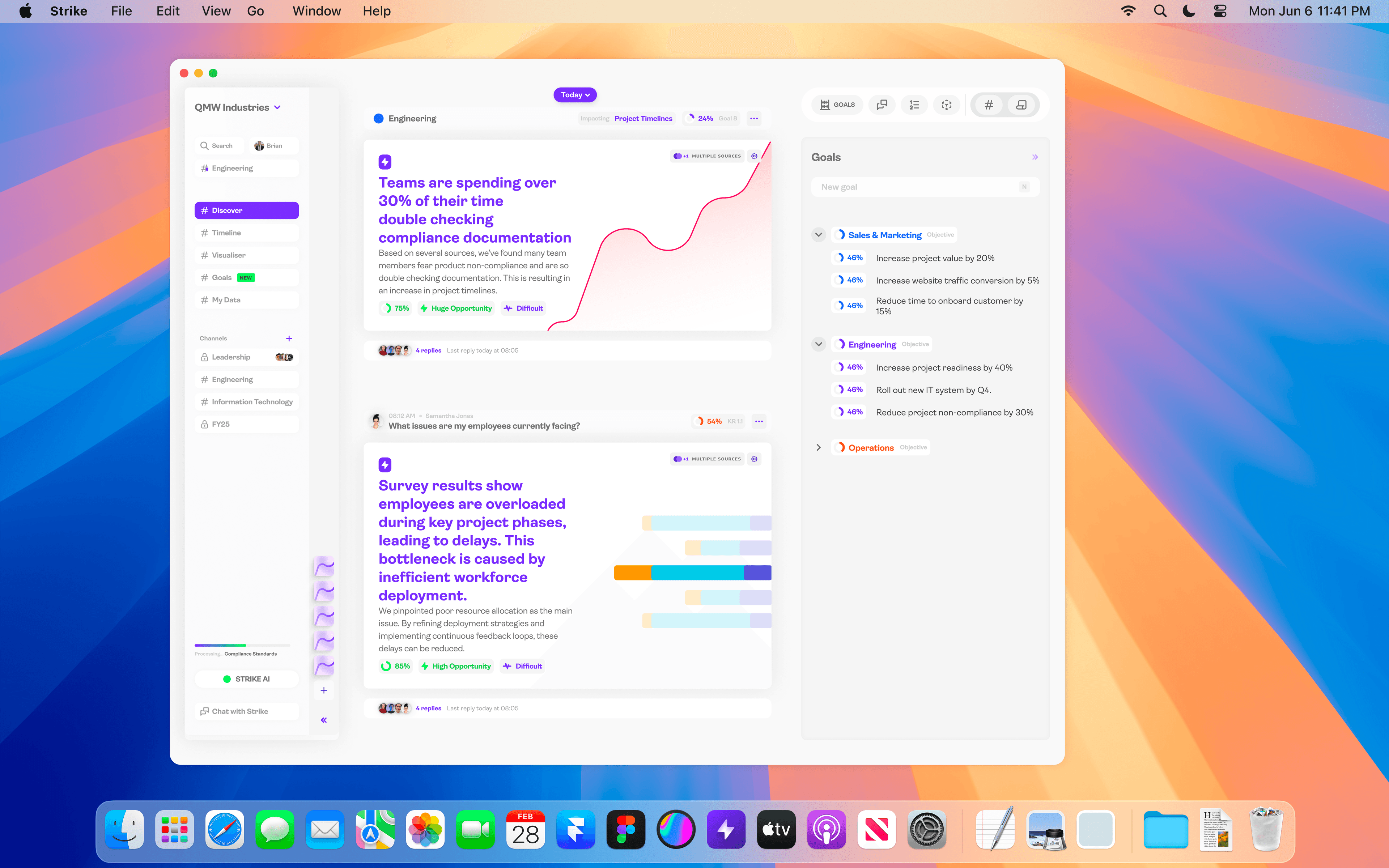

A process case study showing how I approach AI product design: translating complex ML models into experiences that real users trust and adopt. It draws on work across Pathfindr (4.5M users, insurance), a major insurance platform (NDA, AI agents), QMW Industries (safety-critical), Emesent (mining/defence), and Strike Analytics (data analytics).

Why AI Product Design Is Different

Most product design assumes deterministic systems: press a button, get a predictable result. AI products are probabilistic. Outputs vary, confidence levels fluctuate, and the system's behaviour evolves over time. Designing for this requires a fundamentally different approach to trust, transparency, and user control. I've shipped AI products across insurance, heavy industry, healthcare, mining, and data analytics, and every one required solving the same core challenge: making algorithmic decisions feel trustworthy without hiding how they work.

Classical vs AI-Augmented Process

My design process operates at two speeds. The classical process grounds every engagement in rigorous research and validation. The AI-augmented process accelerates each phase using pattern detection, automated testing, and continuous deployment. The key difference is where human judgement adds the most value. In AI product design, the designer's role shifts from creating solutions to curating and validating AI-generated possibilities.

- Classical: Research > Define > Ideate > Prototype > Ship

- AI-Augmented: Signal Detection > Data-Informed Hypothesis > Rapid AI-Assisted Prototype > Automated Validation > Continuous Deploy

- The designer's role shifts from solution creator to AI curator and validator

- Human judgement focuses on ethical guardrails, edge cases, and trust calibration

AI Transparency Principles

At Pathfindr, I established design guidelines for AI transparency in a financial services context (SOC 2 Type II, ISO 27001). The core principle: users must always understand when they're interacting with AI versus humans, and they must be able to override AI decisions at any point.

- Clear visual indicators when AI is driving the experience versus human agents

- Confidence indicators showing how certain the system is about recommendations

- Override mechanisms allowing users to reject AI suggestions and provide feedback

- Escalation paths to human agents when AI confidence drops below a defined threshold

- Audit trails for regulated industries showing how AI reached each decision
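The following minimal Python sketch shows how these principles might fit together for a single recommendation. The recommendation is either shown with an AI label, a confidence badge and an override action, or escalated to a human. Every outcome is written to an audit log. The 0.6 escalation threshold, the confidence bands, and names such as `AIRecommendation`, `present` and `AuditLog` are hypothetical illustrations, not Pathfindr's production code.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical threshold: below this score the case is routed to a human agent.
ESCALATION_THRESHOLD = 0.6


@dataclass
class AIRecommendation:
    product_id: str
    confidence: float  # model score in [0, 1]
    rationale: str     # plain-language reason shown to the user


@dataclass
class AuditEntry:
    timestamp: str
    product_id: str
    confidence: float
    outcome: str
    rationale: str


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, rec: AIRecommendation, outcome: str) -> None:
        """Append a traceable record of how the AI output was handled."""
        self.entries.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            product_id=rec.product_id,
            confidence=rec.confidence,
            outcome=outcome,
            rationale=rec.rationale,
        ))


def confidence_label(confidence: float) -> str:
    """Map a raw score to a user-facing confidence indicator."""
    if confidence >= 0.85:
        return "High confidence"
    if confidence >= ESCALATION_THRESHOLD:
        return "Moderate confidence"
    return "Low confidence"


def present(rec: AIRecommendation, log: AuditLog) -> dict:
    """Decide how a recommendation is shown to the user, and log the decision."""
    if rec.confidence < ESCALATION_THRESHOLD:
        outcome = "escalated_to_human"
        view = {"source": "human_agent", "message": "Connecting you with an adviser"}
    else:
        outcome = "shown_as_ai"
        view = {
            "source": "ai",  # drives the visual "this is AI" indicator
            "badge": confidence_label(rec.confidence),
            "rationale": rec.rationale,
            "actions": ["accept", "reject_with_feedback"],  # override path
        }
    log.record(rec, outcome)
    return view
```

Both the escalation point and the lower edge of the "Moderate" band read from one named constant. Tuning the threshold therefore can't leave the badges and the routing out of step.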

Personalisation Frameworks

For Pathfindr's insurance marketplace serving 4.5M+ users, I created frameworks for ML-driven personalisation across 6 distinct customer mindsets, from 'show me everything' to 'just give me the best deal'. Each mindset required different information density, trust signals, and decision support. The challenge was building a single system flexible enough to adapt in real time while maintaining consistency in regulated financial services.

- 6 customer mindsets mapped through user research and behavioural data

- Real-time interface adaptation based on user interaction patterns

- Progressive disclosure calibrated to each mindset's information appetite

- Consistent compliance and trust signals across all personalisation variants
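One way to keep many variants consistent is to treat each mindset as data and render every layout from the same function. The sketch below assumes that structure. It models only the two mindsets quoted above. The profile fields, the interaction-count heuristic in `infer_mindset`, and the disclosure labels are all hypothetical placeholders.

```python
from dataclasses import dataclass

# Placeholder compliance content, shown in every variant regardless of mindset.
REQUIRED_DISCLOSURES = ("Product disclosure statement", "How we are paid")


@dataclass(frozen=True)
class MindsetProfile:
    name: str
    products_shown: int   # information density
    detail_level: str     # "full" or "summary" on first view
    trust_signals: tuple  # which trust cues to emphasise


SHOW_ME_EVERYTHING = MindsetProfile(
    name="show me everything", products_shown=20, detail_level="full",
    trust_signals=("independent ratings", "full feature table"),
)
BEST_DEAL = MindsetProfile(
    name="just give me the best deal", products_shown=3, detail_level="summary",
    trust_signals=("price guarantee",),
)


def infer_mindset(filters_used: int, details_expanded: int) -> MindsetProfile:
    """Hypothetical heuristic: heavy exploration suggests a 'show me everything' user."""
    if filters_used + details_expanded >= 5:
        return SHOW_ME_EVERYTHING
    return BEST_DEAL


def build_layout(profile: MindsetProfile) -> dict:
    """Render one layout; only the profile-driven fields vary between mindsets."""
    return {
        "mindset": profile.name,
        "products_shown": profile.products_shown,
        "detail_level": profile.detail_level,
        "trust_signals": list(profile.trust_signals),
        # Compliance block is identical across all personalisation variants.
        "disclosures": list(REQUIRED_DISCLOSURES),
    }
```

The disclosures sit outside the profiles, so no personalisation variant can drop them.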

Human-AI Handoff Design

The most critical design pattern in AI products is the handoff threshold: when should AI step back for a human agent? For a major insurance comparison platform (NDA), I built scalable design patterns for this across insurance, energy, and financial comparison workflows. The AI handles routine comparisons and recommendations, but it must recognise emotional signals, edge cases, and regulatory requirements that demand human judgement. Designing these thresholds requires understanding both the AI's capabilities and its failure modes.
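A handoff threshold can be expressed as an ordered set of checks. This Python sketch uses hypothetical inputs and made-up cut-offs, and it is not the NDA platform's logic. The inputs are a sentiment score from some upstream classifier, an out-of-distribution flag standing in for edge cases, and a topic label.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    ai_confidence: float            # model's confidence in its own answer, 0..1
    sentiment: float                # -1 (distressed) .. 1 (positive), hypothetical classifier
    topic: str
    in_training_distribution: bool  # False flags a likely edge case


# Hypothetical topics that always require a human.
REGULATED_TOPICS = {"claim_dispute", "hardship", "complaint"}
MIN_CONFIDENCE = 0.7
DISTRESS_LEVEL = -0.5


def handoff_reason(turn: Turn) -> Optional[str]:
    """Return why the AI should step back for a human, or None if it can continue."""
    if turn.topic in REGULATED_TOPICS:
        return "regulatory"
    if turn.sentiment <= DISTRESS_LEVEL:
        return "emotional"
    if not turn.in_training_distribution:
        return "edge_case"
    if turn.ai_confidence < MIN_CONFIDENCE:
        return "low_confidence"
    return None
```

The sketch checks the hard rules before confidence on purpose. A model can be highly confident and still be the wrong party to handle a regulated or distressed conversation. Returning a reason instead of a bare boolean also gives the human agent context at the moment of handoff.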

Safety-Critical AI Design

At QMW Industries (heavy industry, ISO 9001:2015), I'm architecting an AI decision-support system for safety-critical operational decisions. The design constraints are completely different from consumer AI: every recommendation carries physical safety implications. The system must look up, transform, and inform on operational decisions under strict compliance requirements, and the interface has zero tolerance for ambiguity.

- Decision-support framing (AI informs, humans decide) for safety-critical operations

- Clear distinction between AI recommendations and verified operational data

- Compliance audit integration ensuring every AI-informed decision is traceable

- Fail-safe patterns when AI confidence is insufficient for safety-critical contexts
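The fail-safe pattern can be sketched as a panel builder with three rules. It always shows verified data and labels AI output separately. It withholds a recommendation rather than show a weak one. The 0.95 threshold, the sensor example, and all names here are hypothetical assumptions, not QMW's system.

```python
from dataclasses import dataclass
from typing import Optional

SAFETY_THRESHOLD = 0.95  # hypothetical: far stricter than a consumer setting


@dataclass(frozen=True)
class VerifiedReading:
    sensor_id: str
    value: float
    unit: str


@dataclass(frozen=True)
class AISuggestion:
    text: str
    confidence: float
    input_ids: tuple  # which readings the model based its suggestion on


def build_panel(readings: list, suggestion: Optional[AISuggestion]) -> dict:
    """Build a decision-support panel: AI informs, the operator decides."""
    verified_ids = {r.sensor_id for r in readings}
    panel = {
        # Verified data is always shown, and kept separate from AI output.
        "verified_data": [f"{r.sensor_id}: {r.value} {r.unit}" for r in readings],
        "ai_recommendation": None,
        "fallback": None,
        "requires_operator_confirmation": True,
    }
    usable = (
        suggestion is not None
        and suggestion.confidence >= SAFETY_THRESHOLD
        and set(suggestion.input_ids) <= verified_ids  # only verified inputs count
    )
    if usable:
        panel["ai_recommendation"] = {
            "text": suggestion.text,
            "confidence": suggestion.confidence,
        }
    else:
        # Fail safe: withhold the suggestion rather than show a weak one.
        panel["fallback"] = "AI recommendation unavailable: follow standard operating procedure."
    return panel
```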

Prototyping AI Experiences

I rapidly prototype AI experiences in Python and React to test feasibility before committing engineering resources. At Pathfindr, this meant building functional prototypes that simulated ML-driven personalisation using real data, allowing us to validate the experience design before the models were production-ready. At Emesent, I'm building an AI customer insights system that synthesises 3,000+ data points, designing the queryable interface alongside the underlying data architecture.

Results Across AI Projects

The common thread across all these projects: AI products that ship to production in regulated industries, not prototypes that sit in a demo.

- 60% reduction in self-serve onboarding drop-off through AI-driven progressive disclosure (Pathfindr)

- 96% reduction in insight generation time through ML framework design (Strike)

- AI transparency guidelines adopted across client portfolio (Pathfindr)

- Safety-critical AI decision-support under ISO 9001:2015 compliance (QMW)

- 3,000+ data points synthesised through AI customer insights system (Emesent)

- Adaptive AI agent system translating complex business rules for 4.5M users (insurance platform, NDA)

What I've Learned About AI Design

The biggest misconception in AI product design is that the hard part is the algorithm. It's not. The hard part is designing the trust layer: helping users calibrate their confidence in AI outputs, providing meaningful control without overwhelming them, and maintaining transparency in systems that are inherently probabilistic. The companies that succeed with AI products are those that treat the human experience layer with the same rigour as the model architecture.