Emesent: Nine Problems, Two Designers, One Public Beta

2025 - Present

Emesent builds autonomous mapping and LiDAR technology for underground mines, defence operations, and infrastructure inspection — environments that are GPS-denied, dangerous, and inaccessible to humans. I joined as the first product design lead and walked into nine structural problems: no design leadership, no design system, no user research, a 12-month learning curve, and a 122-page user manual. With two designers and a June 1st public beta deadline, I built the research, design, and iteration foundations that unlocked USMC defence contracts and a partnership with Trimble, the world's largest surveying platform.

The Real Problem

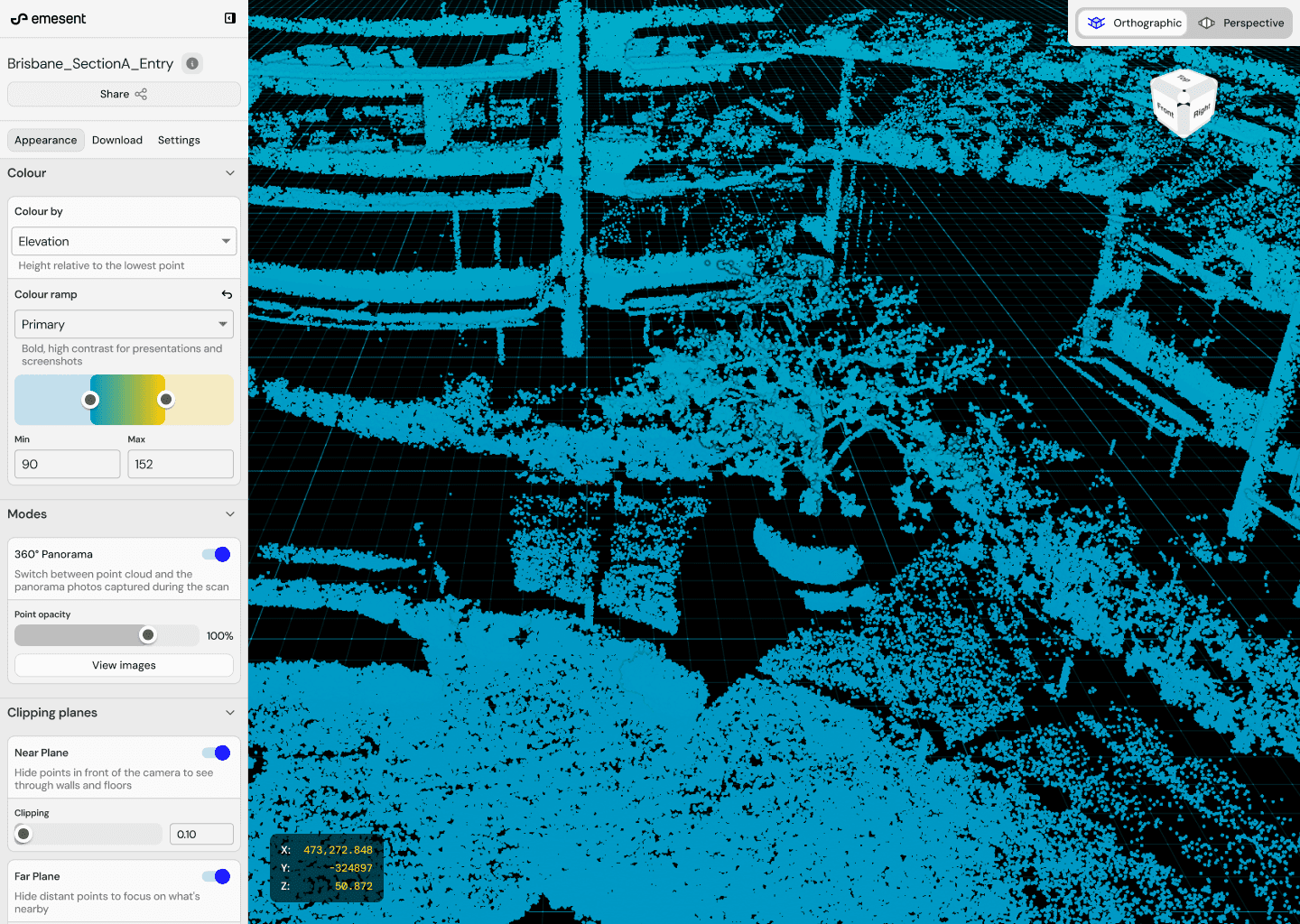

When I joined Emesent as the first product design lead on Omnimap, its cloud platform, I found nine structural problems. No designer had touched the product. Engineering had purchased the MUI component library and was using it out of the box, so the product looked generic, at odds with hardware carrying a premium price point. There were no user research operations: no recruitment, no panels, no structured synthesis. Feedback came primarily from resellers and from channels that surfaced only positive feedback.

The result was a product that took users 12 months to reach comfort, backed by a 122-page user manual that even internal staff described as 'too loud, confusing, complex.' Surveyors were squinting at 8-9px fonts. Backend services were tightly coupled, making releases extraordinarily slow. And I had a June 1st public beta deadline with two designers and no design work completed.

We couldn't figure out how to scale it to train people to use it. Not intuitive enough. Millions of dollars of equipment and labour so it needs to be streamlined.

Tesla

- No design leadership on Omnimap — I was the first designer assigned to the cloud platform

- No design system — MUI out of the box with no visual differentiation from generic defaults

- No user research operations — no recruitment, no panels, no structured synthesis

- 12-month learning curve for typical users, substantiated by direct quotes across 26 companies

- 122-page user manual for the cloud product — a symptom, not a solution

- Engineering-driven UI decisions creating 8-9px fonts that surveyors with glasses couldn't read

- Backend services tightly coupled — iterating on releases was extraordinarily slow

- Two designers against a June 1st public beta deadline with no design work completed

- Revenue targets increasing from $20M to $50M with no design capacity plan

Building Research from Nothing

My first move was to synthesise 3,000+ existing Productboard insights that nobody had structured. From this I created 5 customer mindsets, a useful middle ground between individual anecdotes and broad use cases. Instead of 'this specific customer has an issue' or 'this use case won't fit,' I could now say 'Mindset A and B will have an issue with this feature, but C, D, and E may not.'

I built the full research program around two sessions. The first was an intro call in which users walked through their end-to-end workflow and answered discovery questions that determined their mindset. The second was targeted, mission-based testing with a prototype link. Each round involved around 5 users, with 30 customers interviewed in total, and insights were synthesised against the mindsets after each round. A UX risk scoring framework determined whether further rounds were needed.

My biggest mistake was waiting for permission. I followed the expected path — requesting customer access through sales, account managers, and engineering managers. Weeks passed while I waited for introductions, approvals, and scheduling. The June 1st deadline didn't move. The problem wasn't that people were obstructing — it was that they weren't aligned to the same outcomes or the same sense of urgency. Asking permission became the bottleneck, not the research itself.

Once I recognised this, I stopped waiting. I started guerrilla testing on the street with an iPad — barbers, grocery workers, tradespeople, restaurant owners — validating whether designs were genuinely intuitive to someone who had never seen a point cloud. I posted in Reddit communities for anonymous early feedback. I built alternative channels that didn't depend on the organisational permission chain. The formal research pipeline eventually came together, but the early momentum came from refusing to let alignment gaps stall the work.

With only two designers and no budget to expand, I trained sales teams across the Americas and Europe on research methodology, scaling research capacity across the organisation. Engineers began attending customer interviews and saw firsthand how users struggled. They started coming out of sessions with ideas about how to solve problems differently. That was the biggest win for technical stakeholder alignment.

- 3,000+ Productboard insights synthesised into 5 customer mindsets

- 15-person research panel representing multiple mindsets established for consistent testing

- UX risk scoring framework: high risk + high value proceeds to next round, low risk + high value shifts to surveys

- Trained sales teams in the Americas and Europe on what good vs. bad research questions look like

- Engineers began attending customer interviews, which became the biggest success in winning over technical stakeholders

- Guerrilla testing with non-experts on the street, a workaround born from refusing to wait for the formal pipeline

- Reddit communities for anonymous early feedback before formal customer access was established
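
The two rules of the UX risk scoring framework listed above can be written down as a small decision function. This is a minimal sketch, not the actual framework: the `Level` enum, the `next_step` name, and the returned labels are hypothetical, and the low-value combinations return `None` because the rules above do not cover them.

```python
from enum import Enum
from typing import Optional


class Level(Enum):
    LOW = "low"
    HIGH = "high"


def next_step(risk: Level, value: Level) -> Optional[str]:
    """Return the follow-up for a tested feature.

    Only the two combinations stated in the list above are encoded.
    Anything else returns None rather than guessing a rule.
    """
    if value is Level.HIGH and risk is Level.HIGH:
        return "another research round"
    if value is Level.HIGH and risk is Level.LOW:
        return "survey"
    return None  # low-value cases: not specified, left undecided


if __name__ == "__main__":
    print(next_step(Level.HIGH, Level.HIGH))
    print(next_step(Level.LOW, Level.HIGH))
```

Returning `None` for the unspecified cases keeps the sketch honest about what the framework is known to say, and forces a caller to handle those cases explicitly.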

The Hardest Problem: Making Photoshop Simple

The core design challenge was equivalent to making Photoshop usable for someone who has never touched professional software. Users wanted deeply technical features — stope mapping, convergence monitoring, cross-section analysis — but their baseline software experience was consumer apps. Instagram, social media, phones. Many had never used complex enterprise software before Emesent's products.

The 12-month learning curve was a direct consequence of this gap, substantiated across 26 companies: 'It has taken 18 months to get this far with learning' (General Motors). 'Took me a yr to get there... I'm probably the only person in my company that knows how to use it' (UAS). No one had a clear idea how to solve it. Not me, not the team, not the customers.

It has taken 18 months to get this far with learning.

General Motors

Does not understand half the settings — tends to leave a lot on default and is scared to change parameters.

Interpine

- Contextual action relevance — only surface actions relevant to the user's current task. Don't make me think, show me my pathway forward

- Progressive step reduction — each step should make the next step feel effortless and obvious

- Goal: make the 122-page user manual unnecessary. Users should never need documentation

- Testing with non-experts for the public share viewer: restaurant owners and tradespeople navigating point cloud data validated whether the design was genuinely intuitive

- Rapid iteration cycles: concept to testable prototype to data to iteration, compressed from weeks to hours

Breaking Out of Figma

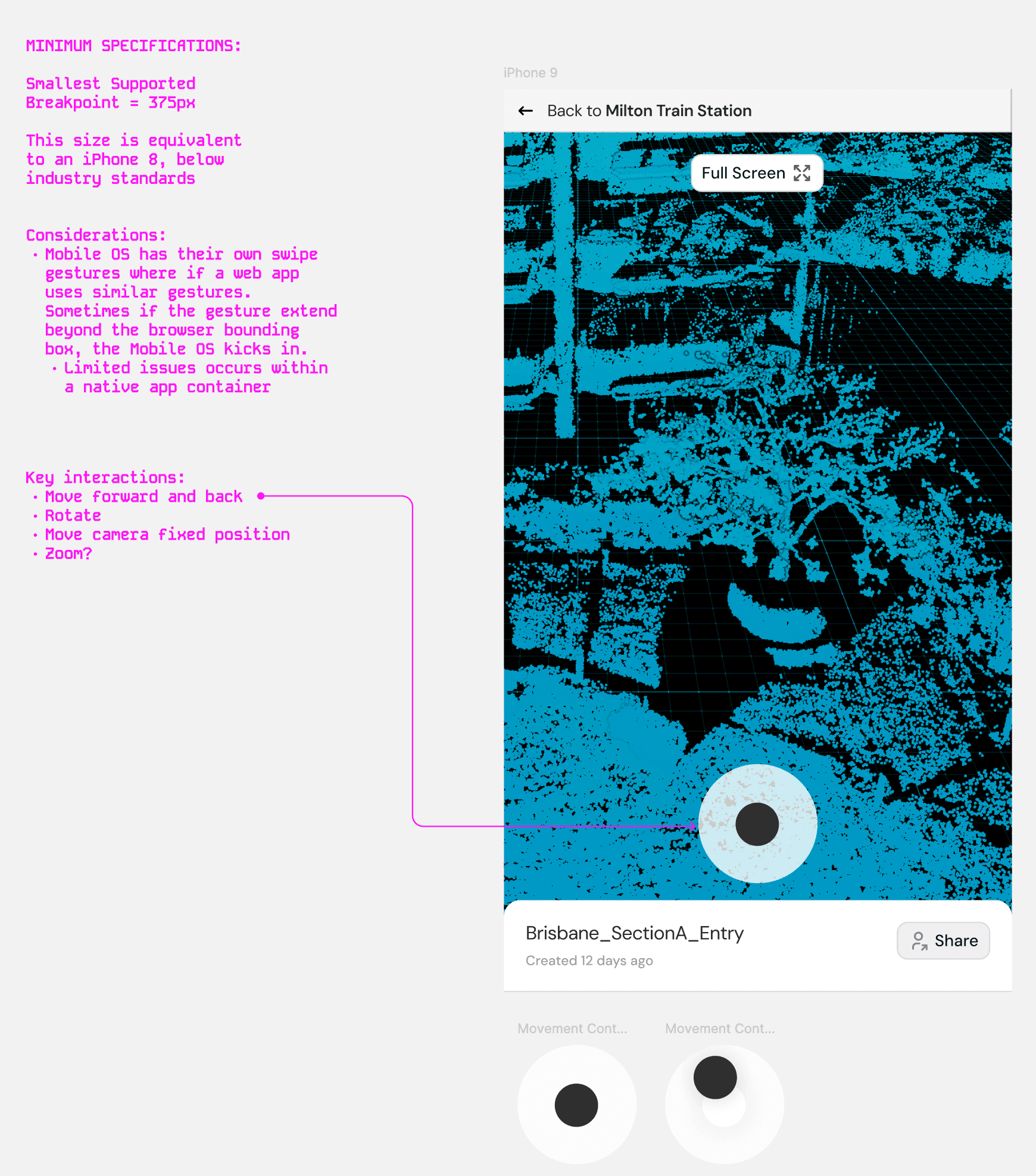

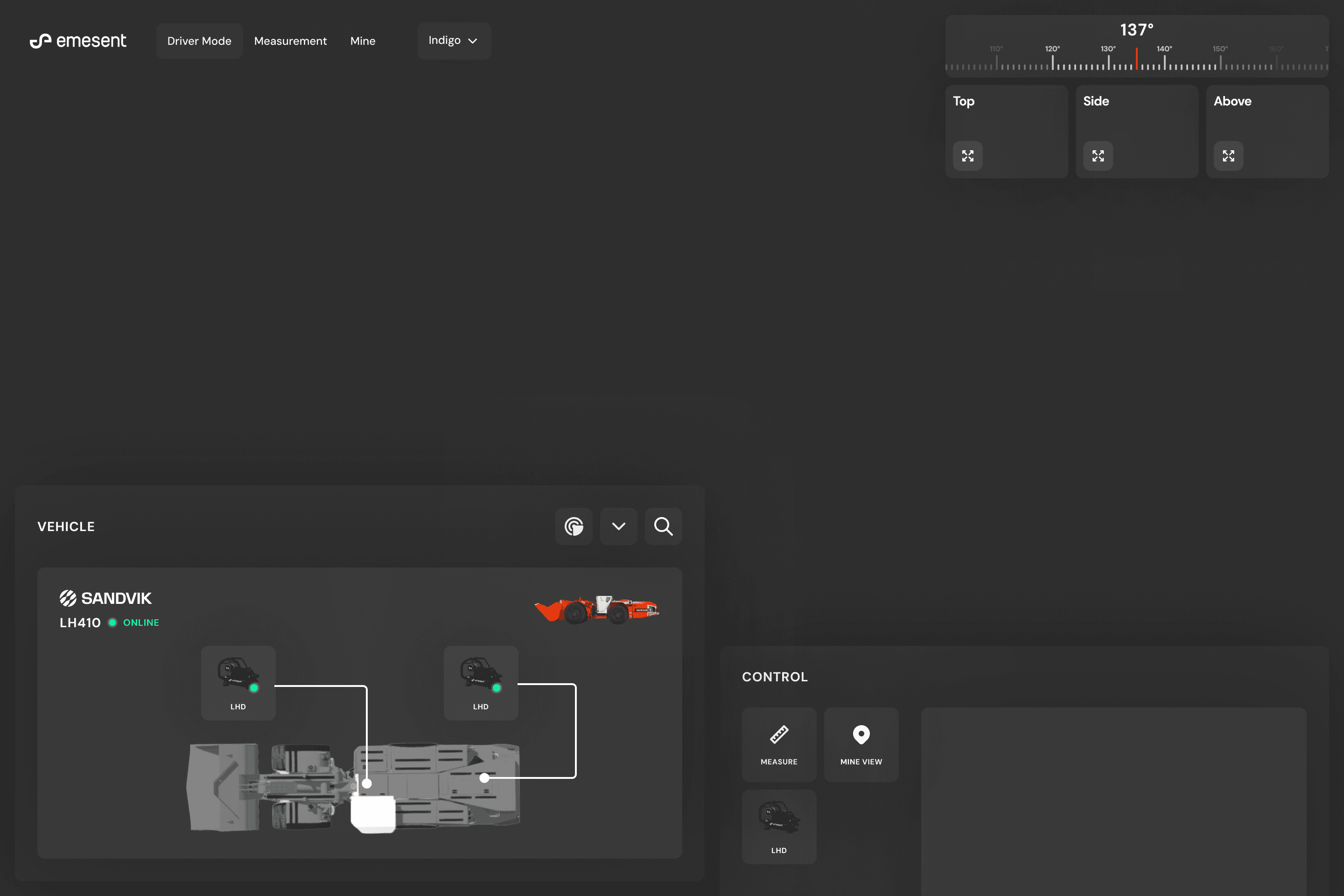

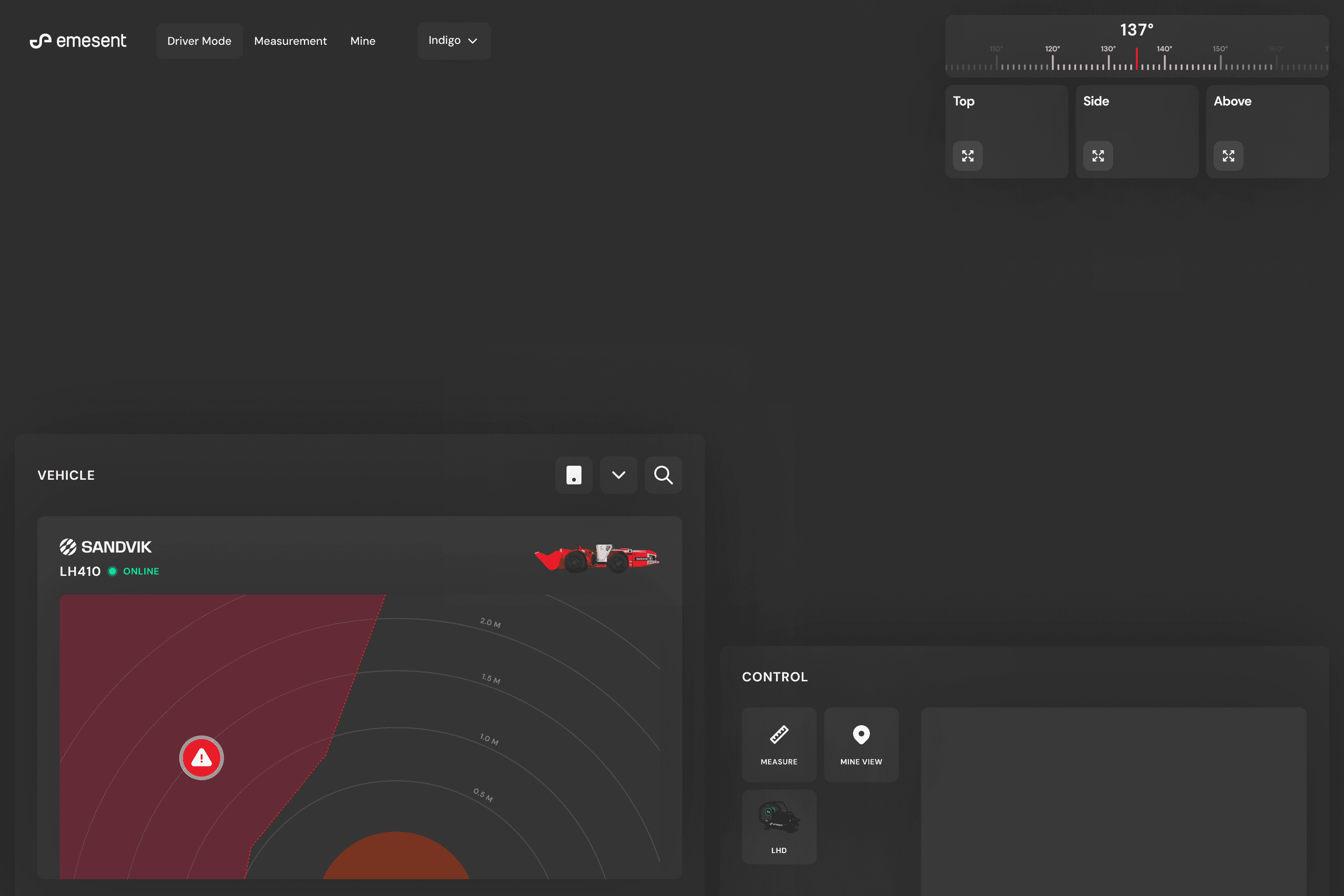

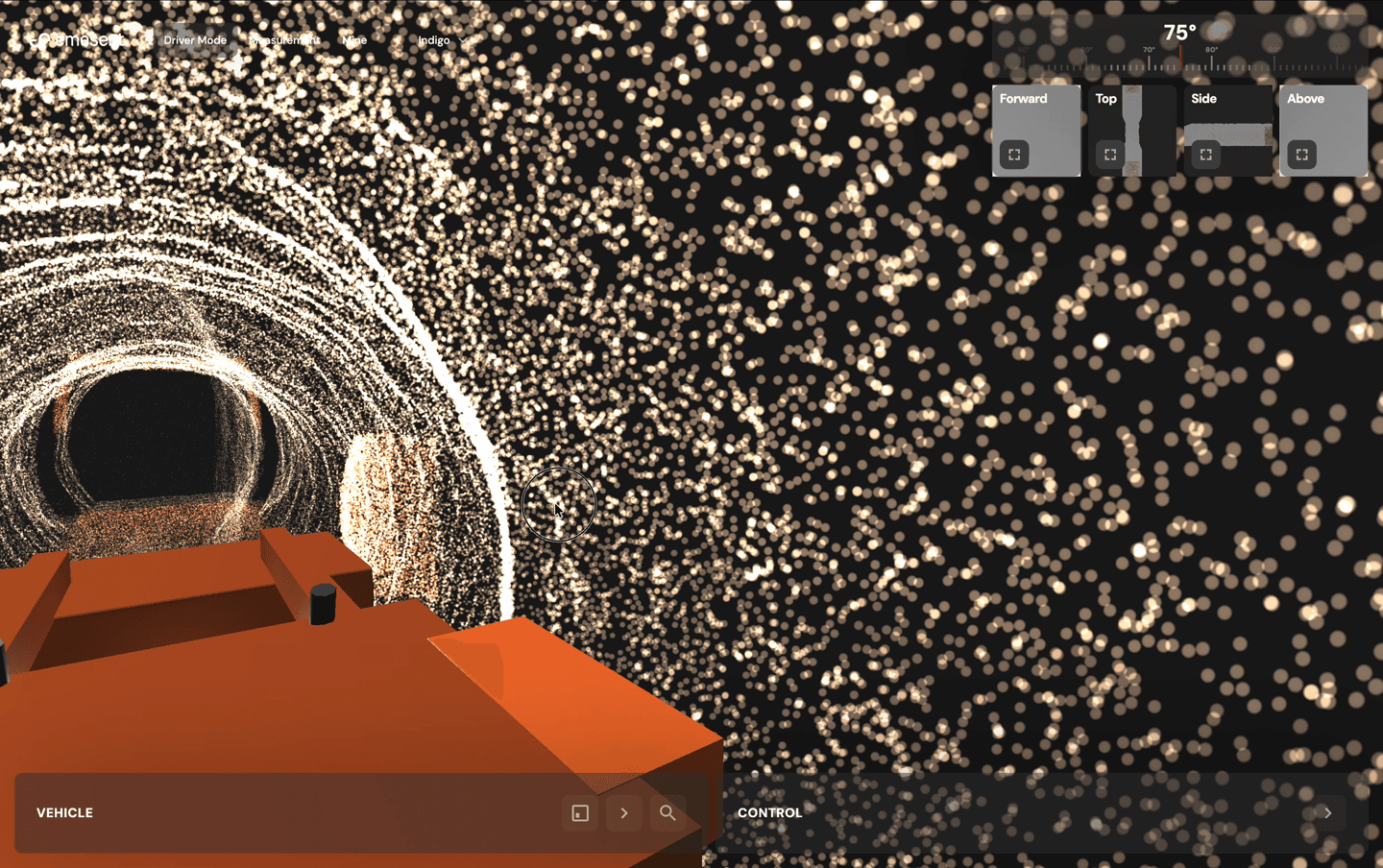

I started designing the LHD teleoperation interface in Figma but hit a fundamental limitation. The problem we were solving was the interaction itself: what happens in a high-stress moment when a bogger operator takes their hands off the vehicle controls to assess a hazard. A Figma prototype would show someone clicking through screens, not whether they could orient themselves in 3D space quickly enough, whether proximity warnings were legible at a glance, or whether camera switching was intuitive under cognitive load.

For any design problem where the value is in the interaction — not the layout, not the visual design, but the moment-to-moment experience — static prototyping tools create a false sense of validation. I designed one or two screens in Figma for visual direction, fed them along with user context and the full operator workflow into Claude Code, refined in Cursor, and had a testable interactive prototype in four hours. By end of day I had substantive data to iterate from.

- Figma for visual direction — look and feel, layout structure, colour system

- Claude Code for initial prototype build (~60% quality from context + wireframes)

- Cursor for refinements and polish

- Total time from concept to testable interactive 3D prototype: ~4 hours

- Same approach used across LHD Commander, Omnimap mobile viewer, and Trimble integration prototype

LHD: Designing for Safety Underground

Load-Haul-Dump vehicles — 52-tonne boggers — are the workhorses of underground mining. Experienced operators who spent years underground are retiring, taking irreplaceable intuitive knowledge with them: puffs of dust signalling instability, the sound of rock movement, visual cues about unsafe formations. New teleoperators work from surface huts with grayscale, pixelated CCTV feeds. No depth perception. No 3D spatial awareness. No way to assess hazards that an experienced operator would have felt, heard, or seen in person.

The consequences are real: multi-million dollar equipment losses, loader burials averaging two per year across Barminco's 20 sites, and fatalities from operators unable to see stope edges. Barminco, one of the world's largest underground mining contractors, approached Emesent to co-develop a purpose-built solution.

Remote Bogger Operators have 4-5 cameras on the Bogger to look at, but visibility is poor. Boggers can get damaged or even buried by poor operator decisions.

Mining Plus

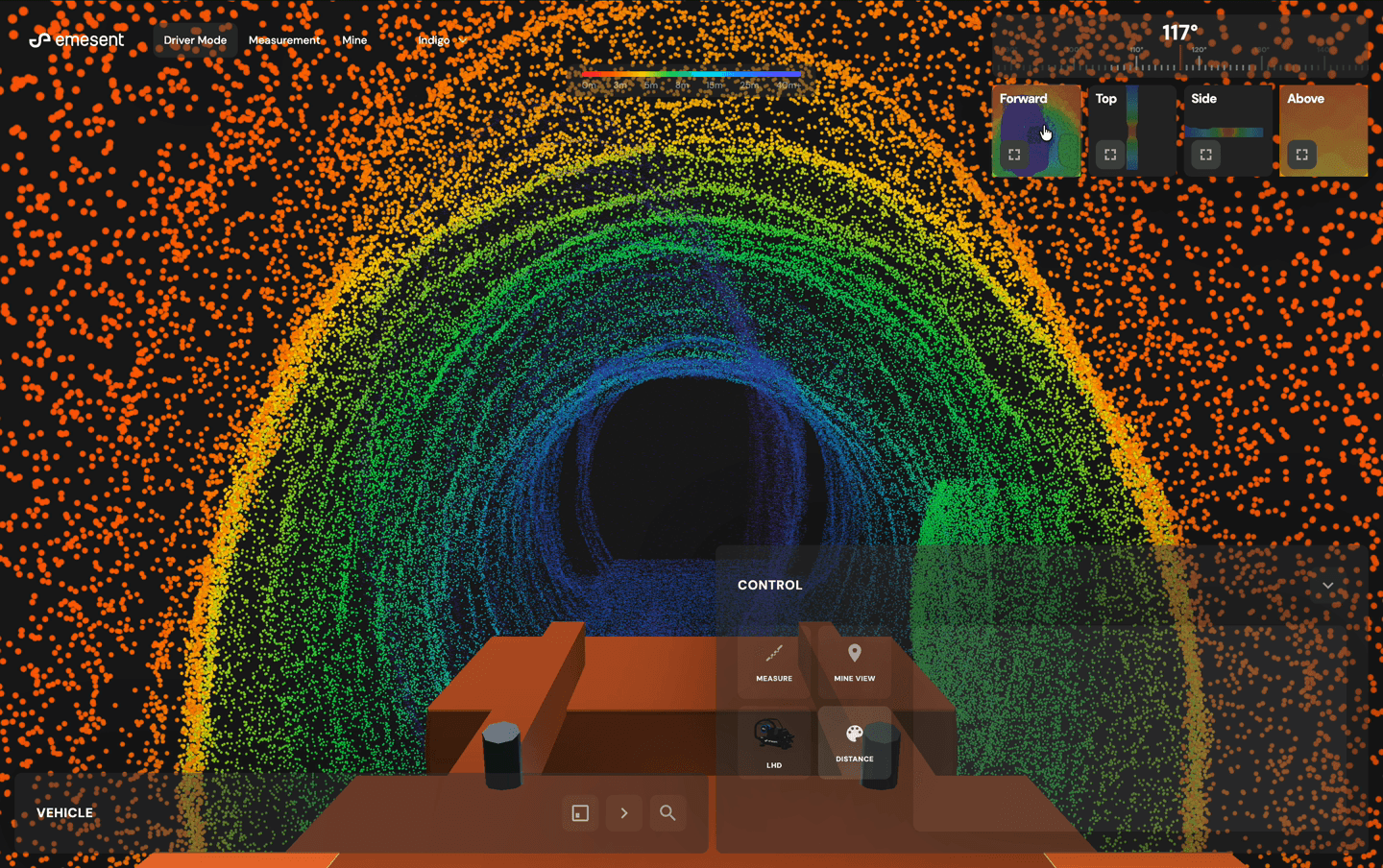

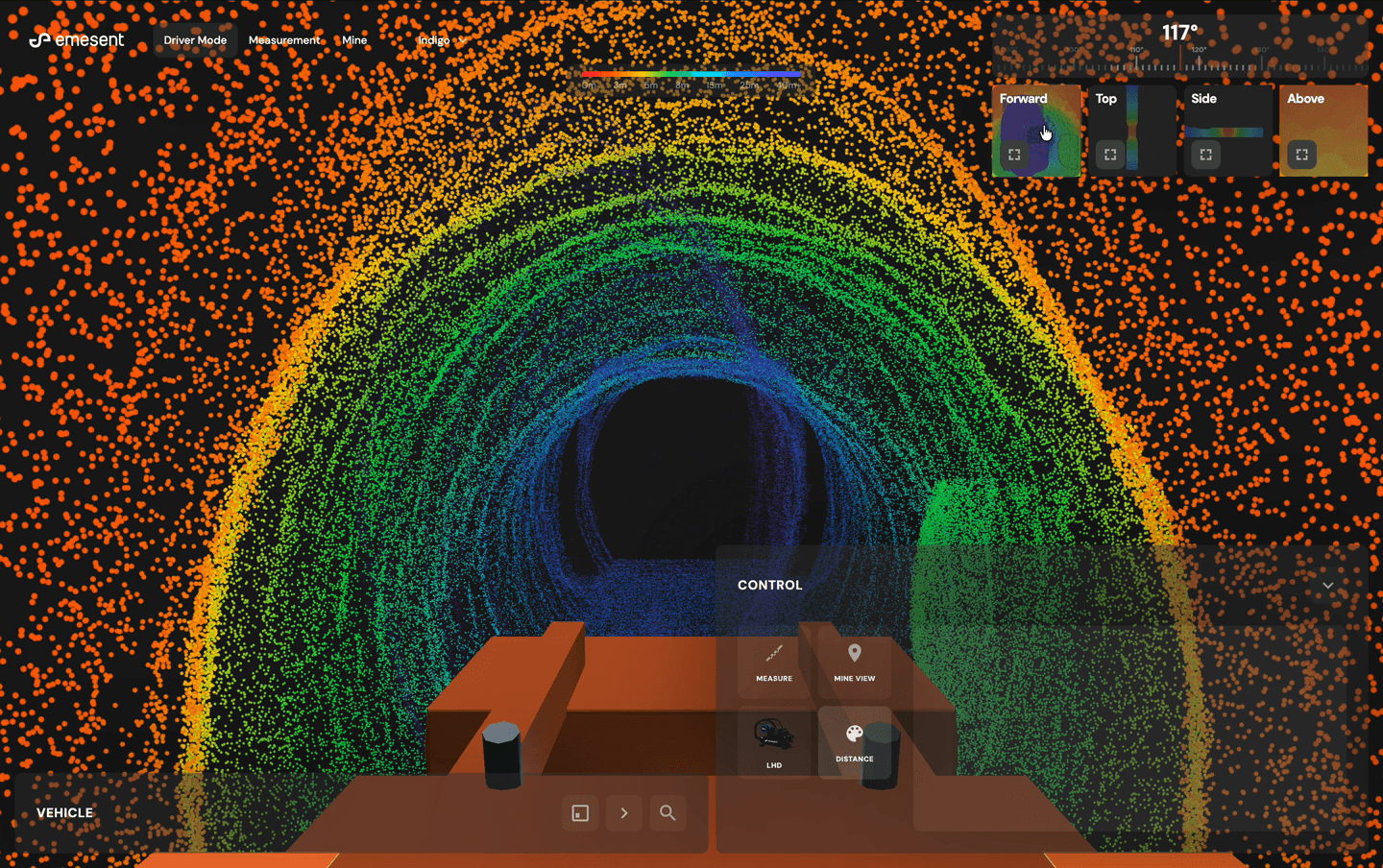

I designed and built a 3D web prototype where a Hovermap LiDAR sensor mounts on the bogger, continuously scanning the environment. Operators can measure distances, inspect intrusions, switch between camera views, and see distance colouring that instantly shows what's close versus safe. When uncertain, they call a supervisor who logs into the same interface — making informed decisions based on actual 3D spatial data rather than a voice description of a pixelated camera feed.

- Interactive 3D web prototype using Three.js with ~567,000 procedurally-generated points

- Real-time proximity detection in 5 directions with red hazard alerts below safety thresholds

- Multi-camera system: FPV (driver's perspective), top-down, side, above, and 360-degree orbital mine view

- Distance colouring mode mapping danger zones (red) through to safe distances (cyan/purple)

- Supervisor collaboration: same spatial view shared across remote sites for informed decision-making

- Built in ~4 hours using a vibe-coded approach and taken directly to customer validation
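
The distance colouring and proximity alerts listed above come down to two small pieces of logic. The sketch below shows them in plain Python rather than Three.js, and is illustrative only, not the prototype's code. Everything specific in it is an assumption: the function names, the intermediate yellow colour stop, the 1 m to 10 m colour range, the 2 m alert threshold, the choice of five directions (front, back, left, right, up), and the axis convention (x right, y up, z forward, vehicle at the origin).

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]
RGB = Tuple[int, int, int]

# Colour stops from danger (red) to safe (cyan, then purple).
# The end colours follow the description above; yellow is an assumed midpoint.
STOPS: List[RGB] = [(255, 0, 0), (255, 255, 0), (0, 255, 255), (128, 0, 255)]
DIRECTIONS = ("front", "back", "left", "right", "up")  # assumed set of five


def distance_colour(distance: float, near: float = 1.0, far: float = 10.0) -> RGB:
    """Linearly interpolate a colour for a point `distance` metres away."""
    t = min(max((distance - near) / (far - near), 0.0), 1.0)  # clamp to [0, 1]
    scaled = t * (len(STOPS) - 1)
    i = min(int(scaled), len(STOPS) - 2)  # index of the lower colour stop
    frac = scaled - i
    a, b = STOPS[i], STOPS[i + 1]
    return tuple(round(a[k] + (b[k] - a[k]) * frac) for k in range(3))


def nearest_by_direction(points: List[Point]) -> Dict[str, float]:
    """Closest point per direction, bucketing each point by its dominant axis."""
    nearest = {d: math.inf for d in DIRECTIONS}
    for x, y, z in points:
        ax, ay, az = abs(x), abs(y), abs(z)
        if ay >= ax and ay >= az:
            if y <= 0:
                continue  # below the vehicle (floor): ignored in this sketch
            direction = "up"
        elif az >= ax:
            direction = "front" if z > 0 else "back"
        else:
            direction = "right" if x > 0 else "left"
        nearest[direction] = min(nearest[direction], math.sqrt(x * x + y * y + z * z))
    return nearest


def hazard_alerts(points: List[Point], threshold: float = 2.0) -> List[str]:
    """Directions whose closest point is inside the safety threshold."""
    return [d for d, dist in nearest_by_direction(points).items() if dist < threshold]


if __name__ == "__main__":
    scan = [(0.0, 0.0, 1.5), (3.0, 0.0, 0.0), (0.0, 4.0, 0.0), (-1.0, 0.0, 0.2)]
    print(hazard_alerts(scan))
    print(distance_colour(1.0), distance_colour(10.0))
```

In a browser prototype the same mapping would typically feed per-vertex colours on the point cloud, with the alert list driving the red hazard indicators.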

Research at Scale: 3,364 Customer Data Points

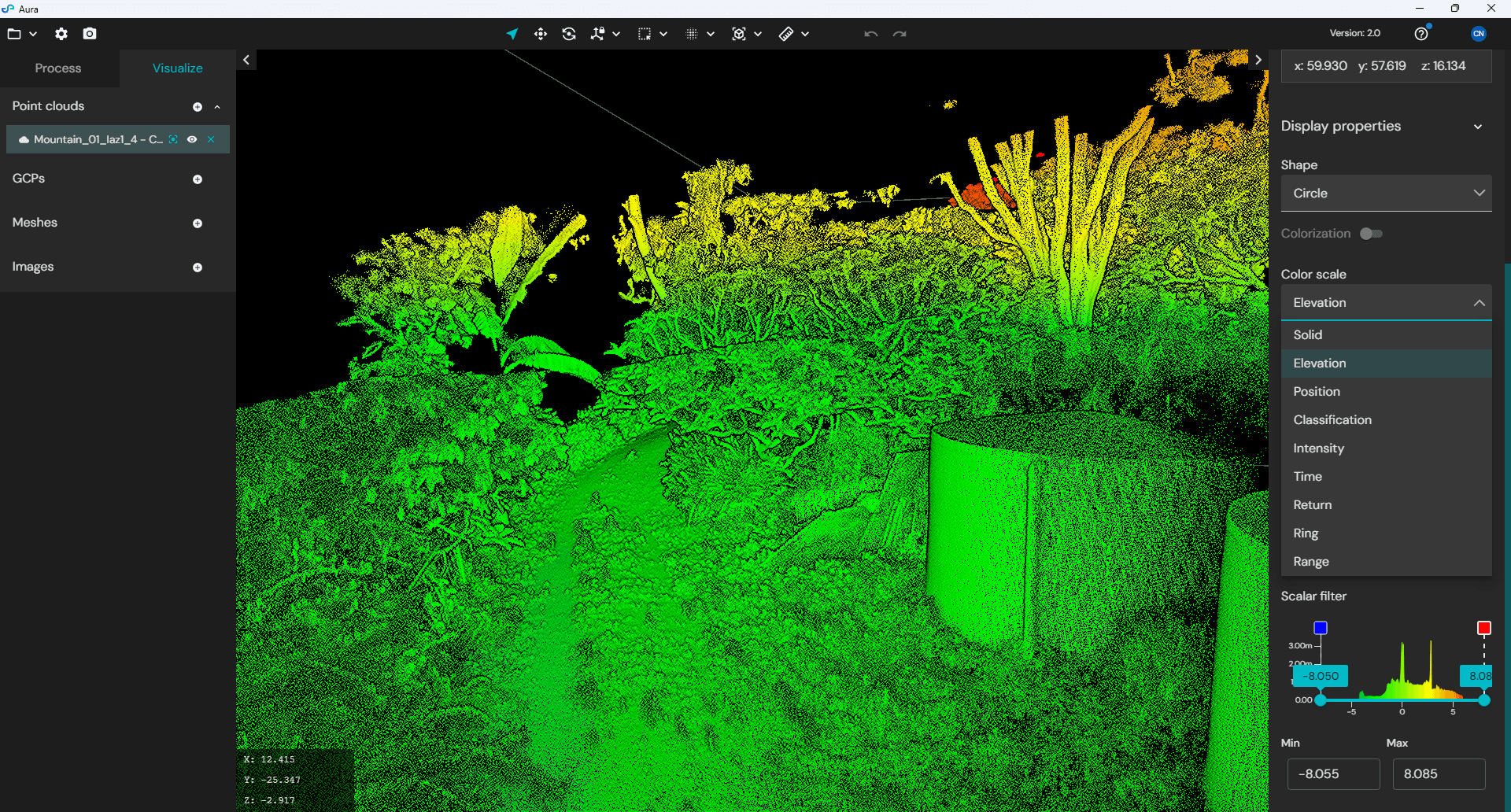

I built an AI-powered customer insights system that made 3,364 Productboard insights across 266 companies queryable by anyone on the product team. Product managers could ask questions and receive synthesised answers with specific user quotes, mindset attribution, and quantitative backing. I created custom instruction sets per team member to ensure research quality — linking queries to specific mindsets, users within those mindsets, their problems, and direct quotes.

The data revealed patterns invisible to anecdotal feedback. Measurement was the single biggest reason users left the ecosystem mid-workflow: 1,745 insights across 162 companies showed users actively defecting to CloudCompare (545 mentions, 61 companies) because basic measurement was failing. First-time users weren't overwhelmed — they were blinded by opacity: hidden toolbars, opaque settings, no guided next step. The sharing problem wasn't delivering files — it was that recipients landed on raw data they couldn't decode. 'No clients have wanted the point cloud yet' was the canonical quote. Surveyors were spending 6+ hours per week translating point cloud data for clients. One customer built their own platform because native sharing was insufficient.

No clients have wanted the point cloud yet.

Prairies Edge

6 hours of meetings every week with clients to discuss what they want to see in the output.

Aerodyne

- 3,364 Productboard insights synthesised across 266 companies

- 545 CloudCompare defection mentions across 61 companies — measurement was the #1 abandonment trigger

- 1,544 crash/fail/rerun mentions across 136 companies with 588 rage-clicking instances

- The first-time problem was opacity, not overload: users couldn't find the controls, rather than facing too many of them

- Custom instruction sets per team member linking queries to mindsets, users, problems, and quotes

- Viewport sharing research (1,471 unique notes, 50+ companies) reframed an engineering ticket from 'encode camera position' to 'share the insight, not the data'

- Training cost: $3,500/day — substantiating the business case to eliminate the learning curve through design
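
Figures of the form "545 mentions across 61 companies" pair a mention count with a count of distinct companies. The sketch below shows one simple way such a pair could be computed, assuming a plain case-insensitive keyword match; it is not the AI-powered system described above, and the `Insight` record, the `mention_stats` function, and the sample data are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Insight:
    company: str
    mindset: str
    text: str


def mention_stats(insights: Iterable[Insight], term: str) -> Tuple[int, int]:
    """Return (insights mentioning `term`, distinct companies among them)."""
    needle = term.lower()
    hits = [i for i in insights if needle in i.text.lower()]
    return len(hits), len({i.company for i in hits})


# Hypothetical records for illustration only, not real customer data.
SAMPLE = [
    Insight("Company A", "Mindset A", "Exported to CloudCompare to measure the stope."),
    Insight("Company A", "Mindset A", "Measurement failed again, back to cloudcompare."),
    Insight("Company B", "Mindset C", "Client could not open the shared point cloud."),
]

if __name__ == "__main__":
    print(mention_stats(SAMPLE, "CloudCompare"))  # (2, 1)
```

Reporting both numbers matters: a high mention count from a single vocal company reads very differently from the same count spread across dozens of companies.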

Results

This work is ongoing. The research foundation and prototype-first approach have already delivered strategic outcomes — defence contracts, a Trimble partnership, and a fundamentally different decision-making process across the product team. Longitudinal metrics on learning curve reduction, support volume, and user retention are being tracked against the public beta launched in mid-2025.

The machine-based licensing design for Aura — moving from subscription to completely offline licensing — directly unlocked Emesent's ability to deliver on defence contracts in air-gapped environments. The Trimble integration prototype, an operating-system-style clickable demo with mock coded implementations, enabled senior executives to finalise a strategic partnership with the world's largest surveying platform. The user research program fundamentally changed how the product team made decisions, moving from anecdotal feedback to structured evidence across 266 companies.

- Machine-based licensing design directly unlocked multi-million dollar USMC and global defence contracts

- Trimble partnership finalised through an OS-style prototype enabling C-level partnership decisions

- Prototype-to-validation cycle compressed from weeks to 4 hours through code-first approach

- LHD safety prototype built and validated for Barminco co-development partnership

- 3,000+ data points synthesised into AI system used daily by product, sales, and engineering teams

- 30 customers interviewed across multiple mindsets with 15-person research panel established

- Research methodology adopted by sales teams across the Americas and Europe, scaling beyond the 2-person design team

- Engineers attending customer interviews for the first time, leading to better solutions informed by real behaviour

What I Learned

Speed of iteration is the single greatest leverage point for design quality. AI tools have made that speed phenomenal — what previously took weeks of Figma screens, stakeholder reviews, and developer handoffs now takes hours from concept to testable prototype. In one instance, my engineer gave me access to connect directly into the codebase. I was vibe-coding design ideas against real services, real data, and real architecture, pushing to a sandbox environment with production-grade fidelity.

AI can help you with the iterations, but it can't help you with the research.

But speed without research direction is just faster guessing. 80% of human behaviour is non-communicative — impossible for AI to understand. The combination of both — rapid code prototyping with structured user research programs, mindset frameworks, UX risk scoring, and unbiased testing methodology — is what produces outsized outcomes. My process didn't change at Emesent. What changed was how fast I could execute it.