Strike Analytics: Building an AI Product from 0 to 1

2023 - 2025

Co-founded an AI analytics platform addressing the $1.3 trillion lost annually to inefficient data analytics. As a player-coach, I owned everything from ML architecture and product design through to go-to-market, building and managing a team of 11 across Sydney, Singapore, Cairo, and Berlin.

The Real Problem

I kept seeing the same problem everywhere: companies were drowning in data but starving for insights. The data analytics space was incredibly fragmented. User interviews lived in Notion, marketing performance in Google Analytics, support conversations in Intercom, and sales data in HubSpot. Nobody could see the connections.

- Siloed data sources: qualitative and quantitative data lived in completely separate systems with no way to compare them

- Time-intensive analysis: teams spent entire days manually correlating different data types. By the time they found insights, market conditions had changed

- Human interpretation bottleneck: even when patterns emerged, translating them into actionable decisions required extensive meetings and debates

- Scale vs speed trade-off: companies could analyse either thoroughly (taking weeks) or quickly (missing crucial context). There was no middle ground

Context

After I interviewed more than 50 people across 20+ cities globally, the challenge crystallised: bridging the gap between what customers say and what they actually do. Most analytics tools could tell you the 'what' but missed the 'why' behind user behaviour. I developed our '10 inches deep vs 10 inches wide' approach: go deep on specific data types before expanding to related areas.

The ML Framework

Working with our data science team, I architected a system combining multiple machine learning approaches. The key insight was to use these models together rather than in isolation: each algorithm validated and enriched the others' findings.

- Topic Modelling to identify patterns in customer conversations and support tickets

- ARIMA models for time-series forecasting of engagement and retention metrics

- Logistic Regression to predict conversion likelihood based on multi-variable inputs

- Sentiment Analysis to quantify emotional responses from qualitative feedback
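To make the "models together" idea concrete, here is a minimal sketch of the logistic-regression piece, assuming the output of the sentiment model is fed in as one of its inputs. The feature names (`engagement_z`, `sentiment_score`), the toy standardised data and the plain batch gradient descent are all illustrative assumptions, not the production pipeline.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """Probability of conversion for one feature vector x."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

def train_logistic(X, y, lr=0.1, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    m = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # d(log loss)/d(logit)
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / m for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b

# Hypothetical toy data: [engagement_z, sentiment_score]; label 1 = converted.
# The sentiment column stands in for the sentiment model's output.
X = [[1.2, 0.8], [0.9, 0.5], [0.4, 0.9], [-1.2, -0.8], [-0.9, -0.5], [-0.4, -0.9]]
y = [1, 1, 1, 0, 0, 0]
w, b = train_logistic(X, y)
print(f"P(convert) for a new account: {predict_proba(w, b, [1.0, 0.7]):.2f}")
```

Feeding one model's output into another is only one way to combine them; the same shape would apply to, say, topic labels encoded as features.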

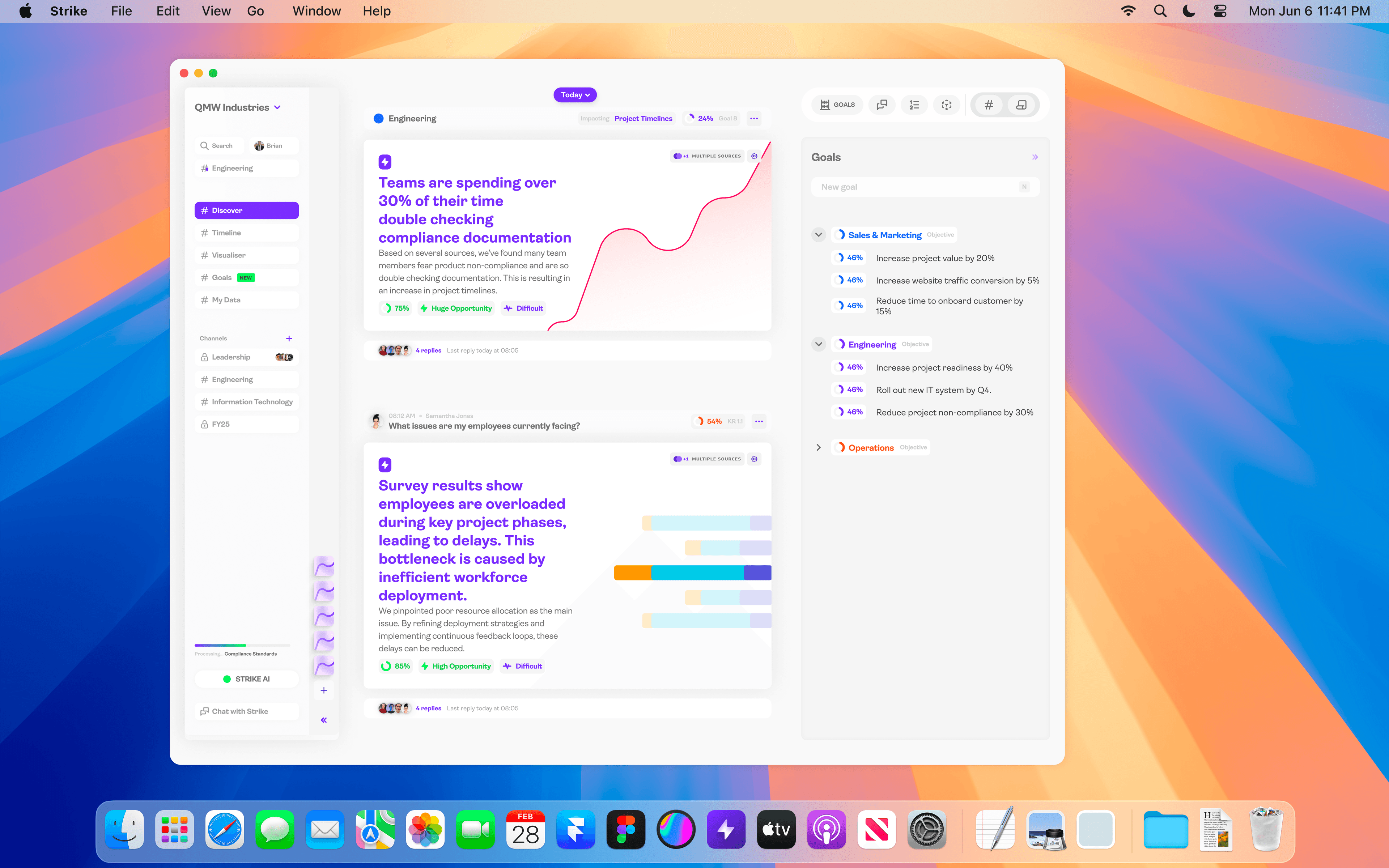

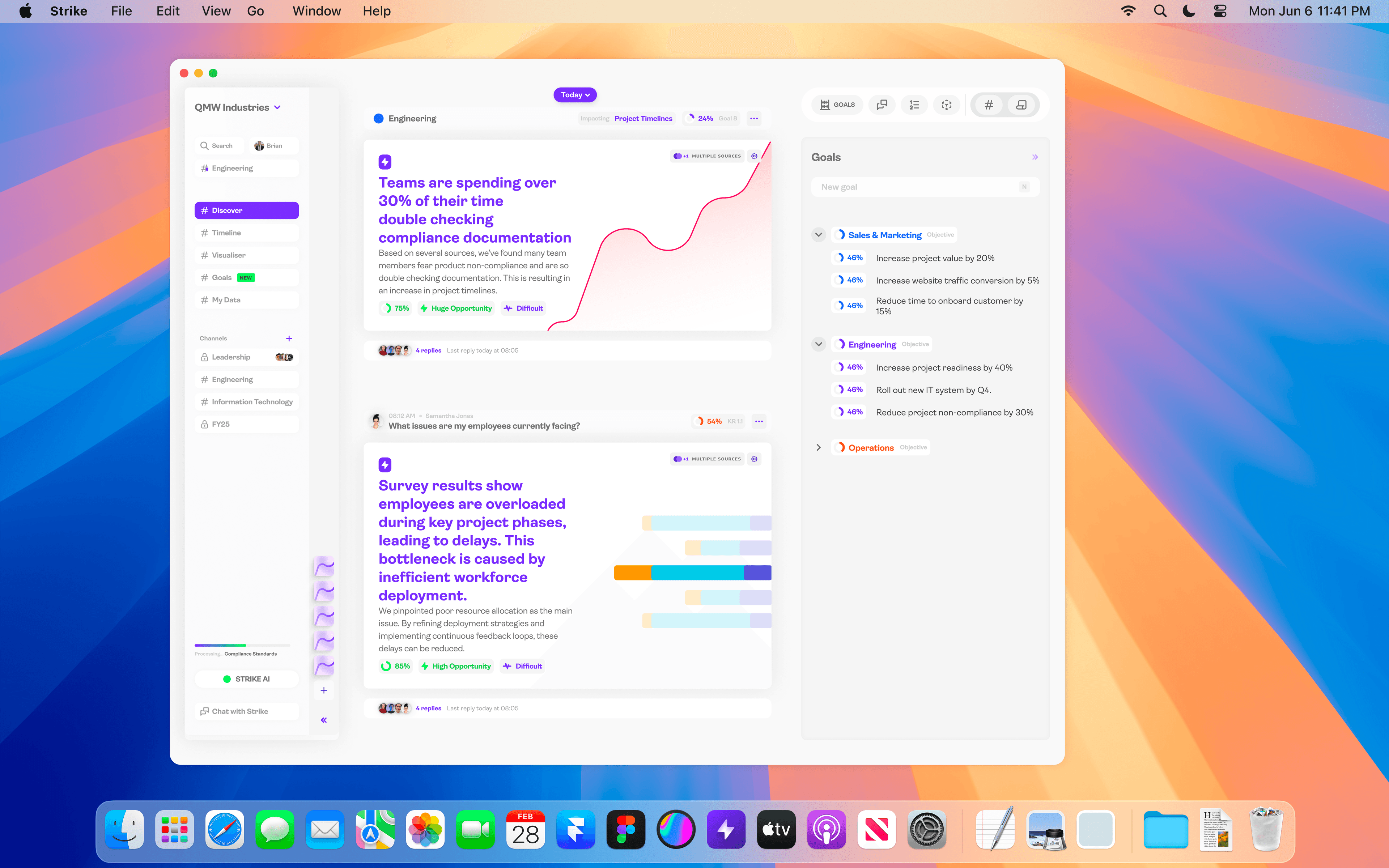

Designing AI That Humans Could Trust

AI analytics requires a completely different design approach from traditional dashboards. You're not just showing data; you're making recommendations that affect business strategy and resource allocation. Every algorithmic decision had to be explainable. We couldn't just tell users what to do; we had to show them why our AI reached those conclusions. I designed a progressive disclosure interface that surfaced key insights immediately while allowing users to drill into the reasoning behind each recommendation.
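One way to back that drill-down with something concrete, sketched here under the assumption of a linear model such as the logistic regression listed above: split the log-odds into per-feature contributions (weight × value), show the headline probability first, and reveal the ranked contributions on drill-down. The `explain` helper and the feature names are hypothetical, and this exact decomposition only holds for linear models.

```python
import math

def explain(weights, bias, features):
    """Split a logistic model's log-odds into per-feature contributions."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    log_odds = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    # Largest absolute contribution first: the "why" a user drills into.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked

# Hypothetical model weights and one account's (standardised) features.
weights = {"sessions_per_week": 0.8, "support_tickets": -0.6, "sentiment": 1.5}
bias = -0.5
features = {"sessions_per_week": 1.0, "support_tickets": 2.0, "sentiment": 0.4}

p, ranked = explain(weights, bias, features)
print(f"Headline: {p:.0%} likely to convert")
for name, contribution in ranked:
    print(f"  {name}: {contribution:+.2f} log-odds")
```

The headline line is the first layer of disclosure; the ranked list is what a user sees after drilling in.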

The Insight-to-Action Pivot

We instrumented everything to understand how teams actually used our insights. The data revealed something critical: people would look at our analysis, say 'that's interesting,' then do nothing. This led to our biggest strategic shift, but it wasn't a single pivot. It was 13 iterative pivots over 14 months, each driven by real market signals rather than assumptions. We operated in weekly iteration cycles, often shipping changes within days of discovering a new insight.

- Users stalled on blank chat screens with no idea what to ask. Pivoted to pre-defined insight stories aligned to each user's role and responsibilities

- Leaders didn't want to use a product themselves. They wanted insights brought to them. Shifted focus from leaders to the product managers and designers who actually work with data

- Marketing data surfaced the most actionable insights. Expanded scope from product teams to cross-functional marketing teams

- Customers without product functions relied on full-service agencies. Agencies were terrified AI would replace them. Pivoted to serve agencies directly

- Agencies used 10+ different tools and resisted consolidation. Narrowed focus to eCommerce businesses with dedicated small teams managing their own data

- eCommerce customers were more data-driven than enterprise but struggled with two things: discovering insights and actioning them. Pivoted from analytics to automation

- Discovered that actioning data in real time, at AI processing speed, could craft an entirely unique web experience for each user. This became the final product direction
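The "that's interesting, then nothing" pattern that started these pivots is the kind of signal a simple view-to-action rate over product events can expose. A minimal sketch, assuming hypothetical `"viewed"` and `"actioned"` event names logged per user and insight; it counts a viewed insight as actioned if the same user also logged an action on it, without checking event order.

```python
def view_to_action_rate(events):
    """Share of (user, insight) pairs that were viewed and also actioned.

    events: iterable of (user_id, insight_id, event_type) tuples, where
    event_type is "viewed" or "actioned" (hypothetical event names).
    """
    viewed = set()
    actioned = set()
    for user_id, insight_id, event_type in events:
        key = (user_id, insight_id)
        if event_type == "viewed":
            viewed.add(key)
        elif event_type == "actioned":
            actioned.add(key)
    if not viewed:
        return 0.0
    return len(viewed & actioned) / len(viewed)

events = [
    ("u1", "i1", "viewed"), ("u1", "i1", "actioned"),
    ("u2", "i1", "viewed"),
    ("u2", "i2", "viewed"),
    ("u3", "i3", "viewed"),
]
print(view_to_action_rate(events))
```

A low rate alongside high view counts is the quantitative version of "people looked, then did nothing".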

Leading a Distributed Team

Managing a team of 11 across Sydney, Singapore, Cairo, and Berlin required new approaches to alignment and communication. I developed a storytelling-based methodology where every technical decision connected back to our core mission of making data accessible to anyone. Daily video updates maintained alignment across time zones, and I made sure everyone understood not just what we were building, but why each feature mattered for our users' success.

Ensuring Model Reliability

To ensure our AI recommendations were reliable, I developed a benchmark system using historical data where we already knew the outcomes. This let us fine-tune our models against real business results rather than theoretical accuracy. We tested every new model version against these benchmarks before releasing updates, maintaining prediction accuracy while improving speed.
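A release gate of this kind can be sketched as: score the current and candidate models on historical cases whose outcomes are already known, and ship the candidate only if it does at least as well. The `Case` shape, the `tolerance` parameter and the toy threshold models below are assumptions for illustration; a speed check would sit alongside this accuracy check.

```python
from typing import Callable, Sequence, Tuple

# (input features, known historical outcome: 1 = happened, 0 = didn't)
Case = Tuple[dict, int]
Model = Callable[[dict], int]

def accuracy(model: Model, cases: Sequence[Case]) -> float:
    """Fraction of historical cases where the model predicted the known outcome."""
    correct = sum(1 for features, outcome in cases if model(features) == outcome)
    return correct / len(cases)

def passes_benchmark(candidate: Model, current: Model,
                     cases: Sequence[Case], tolerance: float = 0.0) -> bool:
    """Release gate: the candidate must match or beat the current model."""
    return accuracy(candidate, cases) >= accuracy(current, cases) - tolerance

# Hypothetical benchmark set and two threshold "models".
cases = [
    ({"sentiment": 0.7}, 1),
    ({"sentiment": 0.3}, 1),
    ({"sentiment": -0.2}, 0),
    ({"sentiment": -0.1}, 0),
]
current = lambda f: int(f["sentiment"] > 0.5)
candidate = lambda f: int(f["sentiment"] > 0.0)

print(accuracy(current, cases), accuracy(candidate, cases))
print("ship candidate:", passes_benchmark(candidate, current, cases))
```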

Results

The platform delivered measurable outcomes across product, growth, and operational metrics. We stretched $125K AUD from a projected 6 months of runway to 14 months through disciplined lean operations, building ML pipelines, workflow tooling, data infrastructure, and a custom admin platform, all while maintaining a $7K monthly burn rate.

- 96% reduction in insight generation time (from 24 hours to 1 hour)

- Business decision-making time cut from 3 hours to 5 minutes

- 8.55% click-through rate on messaging (6x industry benchmarks)

- 1.86% sign-up conversion rate without any acquisition costs

- 23% improvement in prediction accuracy for business decisions

- 40+ customer sign-ups across eCommerce, agencies, and SaaS

- 24 VC meetings secured and 4 accelerator programs completed from 1,289 targeted outreach emails

- $125K stretched to 14 months of operations (2.3x projected runway)
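The headline percentages follow directly from the before/after figures above:

```latex
\frac{24 - 1}{24} \approx 95.8\% \approx 96\% \quad \text{(insight generation, hours)}
\qquad
\frac{180 - 5}{180} \approx 97.2\% \quad \text{(decision-making, minutes)}
\qquad
\frac{14}{6} \approx 2.33 \approx 2.3\times \quad \text{(runway, months)}
```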

Pitching to Investors

I designed the complete investor pitch materials, translating complex product strategy and technical architecture into clear, compelling narratives. Each deck was crafted to communicate different facets of the business: competitive positioning, consumer segmentation, go-to-market strategy, and audience targeting.

What I Learned

Three lessons shaped everything I've built since. First, AI systems for business decisions need explainability more than perfection. Users will trust a 75% accurate system they understand over a 90% accurate black box. Second, the gap between insight and action is where most analytics tools fail. Building automation directly into the insight discovery process eliminates this friction. Third, and hardest: culture amongst leadership is the most difficult thing to change. If decision-makers don't believe in data-driven processes, no product can force adoption. The real product challenge isn't technical; it's organisational. By the time we found product-market fit in real-time eCommerce personalisation, our runway had ended. We shut down operations, but the lessons compound in everything I build now.